Add Automated Data and Logic Tests

Run tests regularly to catch problems with data, bugs in new code, and regressions in existing code.

If you make a change to an analytic pipeline, how do you know that you did not break anything? Between the anxiety and the phone calls at odd hours, a data analytics professional might not sleep well when changes are made to a business-critical system. A robust set of automated tests that check both data and code can prevent IT emergencies.

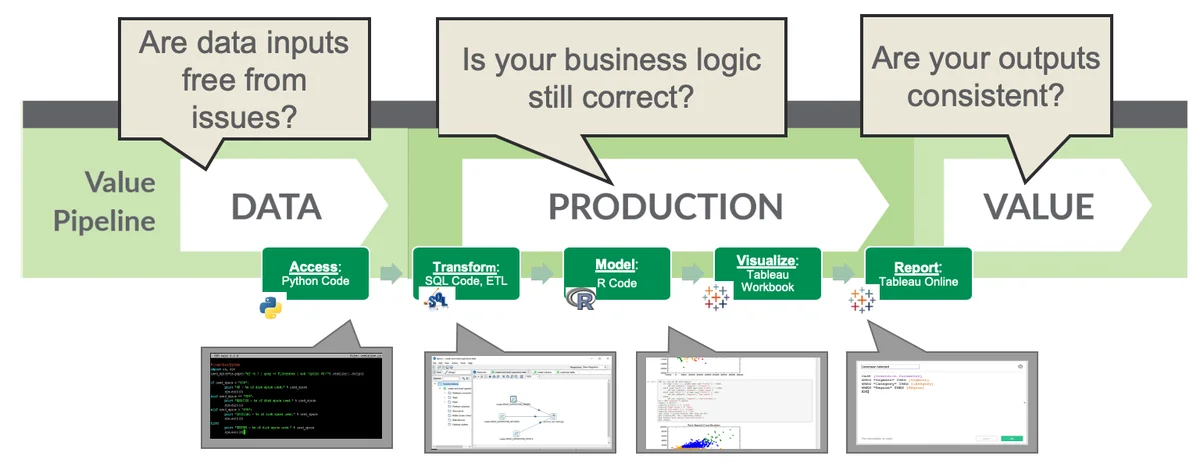

Test throughput in the data analytic pipeline

Automated testing ensures that a feature release is of high quality without requiring time-consuming manual testing. The idea in DataOps is that every time a data analytics team member makes a change, they add a test for that change. There are two categories of tests:

- Logic tests: cover the code in a data pipeline.

- Data tests: cover the data as it flows by in production.

Testing is expanded incrementally, with the addition of each feature, so testing gradually improves and quality is built in. In a large pipeline, there could be hundreds of tests at each stage. Every time a release is deployed to users, the tests are run to validate the functionality of the release. Each one of those tests is an insurance policy against critical failures. This is bound to improve the mental well-being of the data analytics professional.
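The difference between the two categories can be made concrete with a minimal sketch. The function and field names here (`add_order_total`, `check_orders_data`, `quantity`, `unit_price`) are hypothetical and chosen only for illustration. The logic test feeds a fixed input to a pipeline step and compares the result with a known answer; the data test inspects whatever batch arrives in production and reports problems.

```python
def add_order_total(order):
    """Hypothetical pipeline step: compute the total for one order record."""
    return {**order, "total": order["quantity"] * order["unit_price"]}


def test_add_order_total_logic():
    """Logic test: fixed input, known expected output. Run before deployment."""
    result = add_order_total({"order_id": 1, "quantity": 3, "unit_price": 2.5})
    assert result["total"] == 7.5


def check_orders_data(orders):
    """Data test: validate a live batch and return a list of problems found."""
    problems = []
    for order in orders:
        order_id = order.get("order_id")
        # A missing or non-positive quantity breaks the assumed business rule.
        if order.get("quantity") is None or order["quantity"] <= 0:
            problems.append(f"order {order_id}: bad quantity")
        # A missing or negative price is treated the same way.
        if order.get("unit_price") is None or order["unit_price"] < 0:
            problems.append(f"order {order_id}: bad unit_price")
    return problems
```

The logic test only needs to run when the code changes, while the data test would run on every batch, because the data can change even when the code does not.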

Adding tests in data analytics is analogous to the statistical process controls that are implemented in a lean manufacturing operations flow. Tests ensure the integrity of the final output by verifying that work-in-progress (the result of intermediate steps in the pipeline) matches expectations. Testing can be applied to data, models, and logic. The table below shows examples of tests in the data analytics pipeline:

| Inputs | Business logic | Outputs |
|---|---|---|
| Verifying the inputs to an analytics processing stage | Checking that the data matches business assumptions | Checking the results of an operation, for example, a cross-product join |

Apply tests to inputs, business logic, and outputs

At least one test should be implemented for every step in the pipeline. The DataOps philosophy is to start with simple tests and grow over time. Even a simple test will eventually catch an error before it is released to the users. For example, just making sure that row counts are consistent throughout the process can be very powerful. One could easily make a mistake on a join and produce a cross product, which multiplies the number of rows and corrupts the results downstream. A simple row-count test would quickly catch that.
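A row-count test of this kind might look like the following sketch. It assumes that each order is expected to match exactly one customer, so the join should return as many rows as there are orders. The function names and the `customer_id` field are hypothetical, and the join is written as a plain nested loop to keep the example self-contained.

```python
def join_orders_to_customers(orders, customers):
    """Inner join on customer_id, written as a nested loop for illustration."""
    return [
        {**order, **customer}
        for order in orders
        for customer in customers
        if order["customer_id"] == customer["customer_id"]
    ]


def check_row_count(rows_in, rows_out, step_name):
    """Fail loudly if a step that should preserve row count did not."""
    if len(rows_out) != len(rows_in):
        raise AssertionError(
            f"{step_name}: expected {len(rows_in)} rows, got {len(rows_out)}"
        )


orders = [
    {"order_id": 1, "customer_id": "a"},
    {"order_id": 2, "customer_id": "b"},
]
# A duplicated customer key is enough to fan out the join.
customers_with_duplicate = [
    {"customer_id": "a", "name": "Acme"},
    {"customer_id": "a", "name": "Acme Corp"},
    {"customer_id": "b", "name": "Bolt"},
]

joined = join_orders_to_customers(orders, customers_with_duplicate)
try:
    check_row_count(orders, joined, "join_orders_to_customers")
except AssertionError as error:
    print(f"Row-count test failed: {error}")
```

Here the duplicated key turns two orders into three joined rows, and the check reports the mismatch before the inflated result reaches any later step.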

Tests can detect warnings in addition to errors. A warning might be triggered if data exceeds certain boundaries. For example, the number of customer transactions in a week may be OK if it is within 90% of its historical average. If the transaction level falls outside that range, then a warning could be flagged. This might not be an error. It could be a seasonal occurrence, for example, but the reason would require investigation. Once the cause is recognized and understood, the users of the data could be alerted. Warnings can be a powerful business tool that helps the company understand its business better.
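A warning check along these lines could be sketched as follows. The function name, the `tolerance` parameter, and the sample numbers are all hypothetical. In particular, the text does not say exactly how the boundary is measured, so this sketch assumes the tolerance is the allowed fractional deviation from the historical mean, passed in by the caller. The check returns a status instead of raising, because a warning should prompt investigation and not stop the pipeline.

```python
from statistics import mean


def check_weekly_transactions(current_count, historical_counts, tolerance):
    """Return ("ok" | "warning", message) for this week's transaction count.

    tolerance is the allowed fractional deviation from the historical
    average (an assumption of this sketch, e.g. 0.1 for plus or minus 10%).
    """
    average = mean(historical_counts)
    if average == 0:
        raise ValueError("historical average is zero; cannot compute deviation")
    deviation = abs(current_count - average) / average
    if deviation > tolerance:
        return "warning", (
            f"{current_count} transactions deviates {deviation:.0%} "
            f"from the historical average of {average}"
        )
    return "ok", f"{current_count} transactions is within tolerance"


history = [1000, 1100, 900, 1000]  # made-up weekly counts
status, message = check_weekly_transactions(1500, history, tolerance=0.1)
print(status, "-", message)
```

A seasonal spike would trip this check just as a data error would, which is the point: the warning flags the anomaly, and a person decides what it means.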

DataOps is not about being perfect. In fact, it acknowledges that code is imperfect. It's natural that a data analytics team will make the best effort, yet still miss something. If so, they can determine the cause of the issue and add a test so that it never happens again. In a rapid-release environment, a fix can quickly propagate out to the users.

With a suite of tests in place, DataOps allows you to move fast because you can make changes and quickly rerun the test suite. If the changes pass the tests, then the data analytics team member can be confident and release them. Knowledge is thus built into the system and the process stays under control. Tests catch potential errors and warnings before they are released so the quality remains high. When quality is ensured, the data analytics team can operate faster, with a high degree of confidence.