Orchestrate Production

Coordinate your teams, tools, technologies, workflows, and tests across your data organization into a seamless DataOps factory.

Many data professionals spend most of their time on manual processes and fire drills. Freed from those processes, they could do amazing things. An organization will not surpass its competitors or overcome its obstacles while its highly trained data scientists hand-edit CSV files and spend 85% of their time addressing production errors. The data team can free itself from the inefficient and monotonous parts of the job through an automation technology called orchestration.

Think of your analytics development and data operations workflows as a series of steps that can be represented by a set of directed acyclic graphs (DAGs). Each node in a DAG represents a step in your process, such as data access, data cleaning, transforms, models, visualizations, reports, or even cloud infrastructure provisioning. You may execute these steps manually today. An orchestration tool runs the sequence for you under automated control: you schedule a job, and the tool progresses through the steps you have defined, running them serially, in parallel, or conditionally.
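To make the serial, parallel, and conditional execution concrete, here is a minimal sketch of a DAG runner built on Python's standard `graphlib.TopologicalSorter` and `concurrent.futures.ThreadPoolExecutor`. It is not how any particular orchestration product works. The function `run_dag`, its `conditions` parameter, and the step names (`access`, `clean`, `transform`, and so on) are hypothetical, chosen to mirror the kinds of nodes described above. Each step is a plain callable that only records its name.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Mapping, Optional, Set

# A step is any zero-argument callable; real steps would call out to tools.
Step = Callable[[], None]


def run_dag(
    dag: Mapping[str, Set[str]],
    steps: Mapping[str, Step],
    conditions: Optional[Mapping[str, Callable[[], bool]]] = None,
    max_workers: int = 4,
) -> List[str]:
    """Run each step after all of its predecessors finish.

    `dag` maps a step name to the set of step names it depends on.
    Steps that become ready together are submitted to a thread pool and run
    in parallel. A step whose condition returns False is skipped.
    Returns the names of skipped steps.
    """
    conditions = conditions or {}
    skipped: List[str] = []
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            batch = sorter.get_ready()
            futures = []
            for name in batch:
                if name in conditions and not conditions[name]():
                    skipped.append(name)  # conditional step: do not run it
                else:
                    futures.append(pool.submit(steps[name]))
            for future in futures:
                future.result()  # re-raises a step's exception, halting the run
            # Mark the whole batch done (skipped steps included) so successors can run.
            sorter.done(*batch)
    return skipped


# Usage: a small analytics pipeline with hypothetical step names.
log: List[str] = []
dag: Dict[str, Set[str]] = {
    "clean": {"access"},
    "transform": {"clean"},
    "model": {"transform"},
    "report": {"transform"},
    "visualize": {"model", "report"},
}
steps = {
    name: (lambda n=name: log.append(n))
    for name in ["access", "clean", "transform", "model", "report", "visualize"]
}
skipped = run_dag(dag, steps, conditions={"report": lambda: False})
print(log)      # ['access', 'clean', 'transform', 'model', 'visualize']
print(skipped)  # ['report']
```

Here `model` and `report` become ready at the same time and would run in parallel, but the condition on `report` skips it. One design choice to note: this sketch treats a skipped step as finished, so `visualize` still runs. A production tool might instead propagate the skip to downstream steps.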

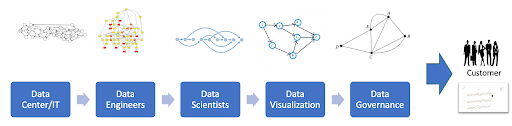

In most enterprises, the production pipeline is not one DAG; it is a DAG of DAGs. It is not even a single pipeline; it is a myriad of pipelines flowing in every direction. And it is certainly not a single team; it is many teams with many roles and functions. The figure below shows various groups within the organization delivering value to customers. Each group uses its preferred tools, languages, and workflows. The groups work independently, yet they depend on each other. The toolchain may include one or many orchestration tools. DataOps unifies all of these elements through meta-orchestration: orchestrating the DAG of DAGs.
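The "DAG of DAGs" idea can be sketched as two levels of the same dependency-ordering logic. This is an illustration under simplifying assumptions, not a description of any DataOps product. Each team's pipeline is an inner DAG, and an outer DAG orders the pipelines themselves. The functions `run_pipeline` and `run_meta` and the pipeline and step names are hypothetical. For brevity, everything runs serially, and each step only records its name. In practice a node might hand off to a different tool or orchestrator per team.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# A DAG maps each node to the set of nodes it depends on.
Dag = Dict[str, Set[str]]


def run_pipeline(pipeline_name: str, pipeline: Dag, log: List[str]) -> None:
    """Run one team's pipeline (an inner DAG) in dependency order."""
    for step in TopologicalSorter(pipeline).static_order():
        # Stand-in for the real work; in practice this might hand off to
        # whichever tool or orchestrator that team uses.
        log.append(f"{pipeline_name}.{step}")


def run_meta(meta_dag: Dag, pipelines: Dict[str, Dag], log: List[str]) -> None:
    """Meta-orchestration: each node of the outer DAG is a whole pipeline."""
    for pipeline_name in TopologicalSorter(meta_dag).static_order():
        run_pipeline(pipeline_name, pipelines[pipeline_name], log)


# Usage with hypothetical team pipelines.
pipelines: Dict[str, Dag] = {
    "ingest": {"clean": {"access"}, "load": {"clean"}},
    "modeling": {"train": {"features"}, "score": {"train"}},
    "reporting": {"dashboard": {"refresh"}},
}
meta_dag: Dag = {"modeling": {"ingest"}, "reporting": {"ingest", "modeling"}}

log: List[str] = []
run_meta(meta_dag, pipelines, log)
# ['ingest.access', 'ingest.clean', 'ingest.load',
#  'modeling.features', 'modeling.train', 'modeling.score',
#  'reporting.refresh', 'reporting.dashboard']
print(log)
```

Because the outer DAG only records dependencies between pipelines, each team keeps its own inner structure. Pipelines with no dependency between them could be run in parallel by a fuller implementation.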

DataOps orchestrates the DAG of DAGs that is your data pipeline.

Data integration tools play an important role in the data operations pipeline, but they do not ensure that each step is executed and coordinated as a single, integrated, and accurate process, nor do they help people and teams collaborate better. DataOps orchestrates your end-to-end pipelines across multiple tools, teams, processes, and environments, from data access through value delivery. The goal is to promote efficiency, increase quality, and reduce organizational complexity.